Benchmarking Compositionality with Formal Languages

Abstract

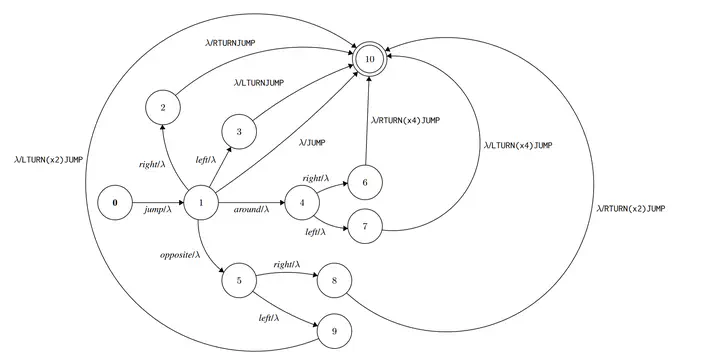

Recombining known primitive concepts into larger novel combinations is a quintessentially human cognitive capability. Whether large neural models in NLP can acquire this ability while learning from data is an open question. In this paper, we investigate this problem from the perspective of formal languages. We use deterministic finite-state transducers to make an unbounded number of datasets with controllable properties governing compositionality. By randomly sampling over many transducers, we explore which of their properties contribute to learnability of a compositional relation by a neural network. We find that the models either learn the relations completely or not at all. The key is transition coverage, setting a soft learnability limit at 400 examples per transition.